When people talk about artificial intelligence, they usually focus on what it produces: human-like text, stunning images, or eerily accurate recommendations. What rarely gets attention is how AI understands anything in the first place. That understanding begins with encoders. Think of an encoder as a translator that converts messy, real-world information into a structured language machines can work with.

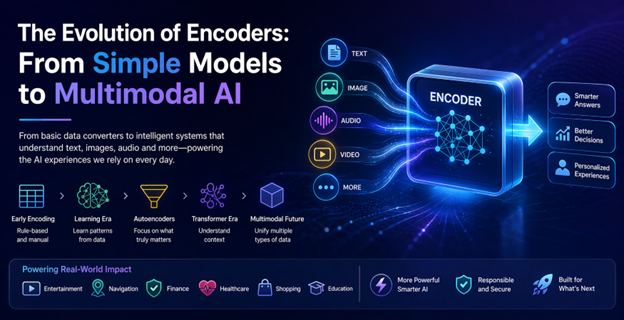

Over time, encoders have quietly evolved from simple data converters into sophisticated systems capable of understanding several types of information at once. This transformation didn’t happen overnight. It’s a story of gradual progress, practical challenges, and breakthroughs driven by real-world needs.

The beginning: When encoding was just a technical step

In the early days of machine learning, encoding was more of a technical necessity than an intelligent process. Developers had to decide manually how to represent data. If a system needed to understand categories like “small,” “medium,” and “large,” those labels had to be converted into numbers.

This worked, but only up to a point. The system didn’t really understand anything; it just processed numbers. For example, an early online store might recommend products based on basic categories, but it couldn’t grasp subtle relationships. Someone buying trainers wouldn’t necessarily be shown fitness watches or hydration gear unless those links were explicitly programmed.
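To make the hand-written encoding concrete, here is a minimal Python sketch. The label lists, function names, and the `overlap` helper are hypothetical illustrations, not taken from any particular system. It shows two common manual schemes (ordinal codes and one-hot vectors) and why neither carries any notion of relatedness.

```python
SIZES = ["small", "medium", "large"]
CATEGORIES = ["trainers", "fitness watch", "kettle"]  # made-up product categories


def ordinal_encode(label, ordered_labels):
    """Map a label to its position in a hand-written ordering."""
    return ordered_labels.index(label)


def one_hot_encode(label, labels):
    """Map a label to a vector with a single 1 in its own slot."""
    return [1 if label == candidate else 0 for candidate in labels]


def overlap(a, b):
    """Dot product: how much two encoded vectors have in common."""
    return sum(x * y for x, y in zip(a, b))


size_codes = [ordinal_encode(size, SIZES) for size in SIZES]

trainers = one_hot_encode("trainers", CATEGORIES)
watch = one_hot_encode("fitness watch", CATEGORIES)
kettle = one_hot_encode("kettle", CATEGORIES)

# Every pair of different categories has zero overlap, so the encoding
# cannot tell that trainers are closer to fitness watches than to kettles.
print(size_codes)
print(overlap(trainers, watch), overlap(trainers, kettle))
```

Any link between trainers and fitness watches would have to be added as an explicit rule on top of these numbers.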

In short, early encoders handled data, not meaning.

Learning instead of being told

Everything started to change when neural networks entered the picture. Instead of relying entirely on human instructions, systems began learning patterns directly from data. Encoders became more than converters; they became learners.

Take image recognition as a real-world example. Instead of telling a system what defines a cat (ears, whiskers, tail), developers could train it on thousands of images. The encoder would gradually work out the patterns on its own. This change made AI far more adaptable and accurate.

The same idea applied to language. Words were no longer just symbols; they became vectors, mathematical representations that capture meaning and relationships. That’s why modern search engines can understand that “cheap flights” and “budget airfare” are closely related, even though the wording is different.
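One common way to compare such vectors is cosine similarity. The sketch below assumes tiny, hand-written 3-dimensional vectors purely for illustration; real learned embeddings have hundreds of dimensions and come from a trained model, not from a table like this.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for this example only.
embeddings = {
    "cheap flights": [0.90, 0.80, 0.10],
    "budget airfare": [0.85, 0.75, 0.20],
    "banana bread": [0.10, 0.05, 0.95],
}

related = cosine_similarity(embeddings["cheap flights"], embeddings["budget airfare"])
unrelated = cosine_similarity(embeddings["cheap flights"], embeddings["banana bread"])

print(f"cheap flights vs budget airfare: {related:.2f}")
print(f"cheap flights vs banana bread:   {unrelated:.2f}")
```

The two travel phrases share no words, yet their vectors point in nearly the same direction, which is the property a search engine can exploit.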

Autoencoders: Finding what really matters

A major leap came with the introduction of autoencoders. These models were designed around a simple but powerful idea: compress data and then reconstruct it. To do this successfully, the encoder had to identify what really mattered and ignore everything else.

This approach proved highly useful in real-world scenarios. In banking, for instance, autoencoders are used to detect fraud. By learning what “normal” behaviour looks like, they can quickly spot unusual transactions. If someone suddenly makes a high-value purchase in a different country, the system flags it not because it was told to, but because it has learned that the behaviour is unusual.
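The compress-then-reconstruct idea, and its use for spotting unusual behaviour, can be sketched with a deliberately tiny model. The example below is a toy under stated assumptions: a linear autoencoder with tied weights that squeezes two made-up, scaled features into one number, trained by plain gradient descent. The data, the starting weights, and the `threshold` value are all invented for illustration; production fraud systems are far larger and non-linear.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def encode(w, x):
    """Compress a 2-D point into a single number."""
    return dot(w, x)


def decode(w, z):
    """Rebuild a 2-D point from its 1-D code."""
    return [z * wi for wi in w]


def reconstruction_error(w, x):
    """Squared distance between a point and its reconstruction."""
    x_hat = decode(w, encode(w, x))
    return sum((xi - hi) ** 2 for xi, hi in zip(x, x_hat))


def train(data, w, lr=0.1, epochs=300):
    """Full-batch gradient descent on the mean reconstruction error."""
    for _ in range(epochs):
        grad = [0.0, 0.0]
        for x in data:
            z = encode(w, x)
            residual = [xi - z * wi for xi, wi in zip(x, w)]
            rw = dot(residual, w)
            for j in range(2):
                grad[j] += -2.0 * (rw * x[j] + z * residual[j]) / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


# "Normal" behaviour: the two hypothetical features rise and fall together.
normal = [[0.8 * t, 0.6 * t] for t in (-1.0, -0.6, -0.2, 0.2, 0.6, 1.0)]
weights = train(normal, [0.5, 0.5])

threshold = 0.05        # assumed cut-off, picked by hand for this toy data
unusual = [-0.6, 0.8]   # a point that breaks the learned pattern

normal_errors = [reconstruction_error(weights, x) for x in normal]
unusual_error = reconstruction_error(weights, unusual)
is_flagged = unusual_error > threshold

print(max(normal_errors) < threshold, is_flagged)
```

Nothing in the code says which points are suspicious. The unusual point is flagged only because the model, having learned to reconstruct normal data well, reconstructs it badly.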

Another everyday example is photo storage. When you upload photos to a platform, encoders help reduce file size while keeping important details intact. That’s why images load quickly without looking heavily compressed.

The transformer era: Context changes everything

The real turning point in encoder evolution came with transformer models. What made them different was their ability to understand context. Instead of processing information step by step, they look at everything at once and decide what matters most.
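The mechanism behind "looking at everything at once" is usually called self-attention. The following is a stripped-down sketch of scaled dot-product attention, assuming hand-written 2-dimensional token vectors and omitting the learned query, key, and value projections and the multiple heads that real transformers use.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Each token scores every token, then takes a weighted average of them."""
    dim = len(vectors[0])
    all_weights, outputs = [], []
    for query in vectors:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        all_weights.append(row)
        outputs.append(
            [sum(w * v[i] for w, v in zip(row, vectors)) for i in range(dim)]
        )
    return all_weights, outputs


# Hypothetical token vectors, invented for this example.
tokens = ["she", "saw", "telescope"]
vectors = [[0.0, 1.0], [1.0, 0.2], [0.9, 0.1]]

weights, outputs = self_attention(vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each row of weights says how strongly one token draws on every other token in the sentence, all computed in a single pass rather than word by word.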

This is especially important in language. Consider the sentence: “She saw the man with the telescope.” Who has the telescope? Earlier models might struggle with this ambiguity. Transformer-based encoders, however, analyse the entire sentence and make a more informed interpretation.

This breakthrough powers many tools people use daily. When you interact with a chatbot, dictate a message, or translate text online, transformer encoders are working in the background. They make these interactions feel natural, not mechanical.

Encoders in everyday life

Today, encoders are everywhere, even if most people don’t realise it. They shape the way we interact with technology in subtle but powerful ways.

Streaming platforms use encoders to understand viewing habits. If you watch crime documentaries and psychological thrillers, the system doesn’t just categorise your interest; it learns patterns and suggests content that matches your taste more closely over time.

Navigation apps rely on encoders to process traffic data, road conditions, and user behaviour. That’s how they can suggest faster routes, sometimes even before congestion becomes obvious.

In healthcare, encoders assist doctors by analysing medical images. They don’t replace human judgement, but they can highlight areas of concern, helping professionals make quicker and more accurate decisions.

Multimodal encoders: Understanding more than one type of data

The latest evolution in encoders is perhaps the most exciting: multimodal ability. Instead of working with just one type of data, these encoders can process text, images and more at the same time.

This opens the door to experiences that feel far more natural. Imagine taking a photo of a plant and asking your phone how to care for it. A multimodal encoder can analyse the image, understand your question, and provide a useful answer in seconds.

Online shopping is another area seeing rapid improvement. Instead of typing a description, users can upload an image of a product they like. The system then finds similar items, combining visual recognition with contextual understanding.
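One plausible way to build this kind of search is to place images and text in a single shared vector space and rank catalogue items by similarity to the query. The sketch below assumes that setup. The vectors stand in for the output of image and text encoders and are written by hand, the catalogue is invented, and averaging the two query vectors in `combine` is just one simple choice among many.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def combine(image_vec, text_vec):
    """Blend an image embedding and a text embedding into one query vector."""
    return [(i + t) / 2 for i, t in zip(image_vec, text_vec)]


# Hypothetical catalogue, already embedded in the shared space.
catalogue = {
    "red running shoe": [0.9, 0.1, 0.8],
    "blue running shoe": [0.9, 0.1, 0.1],
    "red handbag": [0.1, 0.9, 0.8],
}

photo_embedding = [0.85, 0.15, 0.5]  # stand-in for an encoded photo of a shoe
text_embedding = [0.5, 0.5, 0.9]     # stand-in for the encoded words "in red"

query = combine(photo_embedding, text_embedding)
ranked = sorted(
    catalogue,
    key=lambda name: cosine_similarity(query, catalogue[name]),
    reverse=True,
)

print(ranked[0])
```

The photo alone points toward shoes and the text alone points toward red items. Only the combined query puts the red running shoe clearly on top.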

This ability to connect different types of information is pushing AI closer to how humans experience the world.

Challenges that come with progress

As encoders become more powerful, they also become more demanding. Advanced models require substantial computing resources, which can be expensive and energy-intensive. This raises important questions about sustainability and accessibility.

Bias is another concern. Since encoders learn from data, they can reflect existing inequalities. For example, if a system is trained on biased hiring data, it may unintentionally favour certain groups over others. Addressing this issue requires careful data selection and continuous oversight.

There’s also the matter of privacy. Encoders often process personal information, making data security an important priority. Striking the right balance between innovation and responsibility is an ongoing challenge.

What lies ahead

The future of encoders is less about dramatic breakthroughs and more about refinement. Researchers are working on making models faster, more efficient, and less resource-heavy. This could make advanced AI tools accessible to smaller businesses and independent developers.

Personalisation is another area of growth. Encoders may soon adapt in real time, learning from individual users to deliver tailored experiences. In education, for example, systems could adjust content based on how a student learns best, making lessons more effective.

Multimodal systems will also continue to improve, blending different types of data more seamlessly. This could lead to more intuitive interfaces, where interacting with technology feels as natural as interacting with another person.

Conclusion: A quiet revolution with a big impact

Encoders may not be the most visible part of artificial intelligence, but they’re among the most important. Their evolution from simple data converters to intelligent, multimodal systems has reshaped what machines can do.

What makes this journey interesting is how closely it mirrors real-world needs. Each advancement wasn’t just about better technology; it was about solving practical problems: understanding language, recognising images, detecting fraud, and improving everyday experiences.

As AI continues to develop, encoders will remain at its core, quietly transforming raw information into meaningful insight. They may work behind the scenes, but their impact is impossible to ignore.