Nvidia’s products for data centers now include a full stack with all of the pieces, said Sandip Gupta, executive managing director and head of global strategic alliances at NTT Data. “From a customer perspective, if they believe in an integrated stack, it makes things simple,” Gupta said.

The integrated data center cuts complexity and improves efficiency across cooling, networking and storage. “It’s driven by the sentiment of an enterprise on how dependent they want to be on one supplier versus mix and match,” Gupta said.

AI complexity has increased manyfold with multi-agent systems and technologies like OpenClaw, which Huang said is as big a deal as HTML and Linux. These technologies will generate tokens at an unprecedented pace and strain networks, memory and storage simultaneously.

AI data also carries context, and moving it inefficiently wastes power and drives up cost. A new networking and storage layer is needed to move data intelligently and efficiently. A technology called the KV (key-value) cache holds the contextual memory critical for processing agentic AI systems.
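To make the KV cache idea concrete, here is a minimal sketch of the standard technique used in transformer decoding. It is not Nvidia’s implementation, which the article does not describe. During generation, each new token’s key and value vectors are appended to a cache, so attention over earlier context reuses them instead of recomputing them. Because the cache grows with every token, long agentic contexts put steady pressure on memory. The `KVCache` class, the `kv_cache_bytes` helper and the model dimensions in the last line (32 layers, 8 KV heads, head size 128, a 128,000-token context, 2-byte FP16 values) are hypothetical and chosen only for illustration.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max score for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


class KVCache:
    """Hypothetical single-head cache of the key/value vectors of every token seen so far."""

    def __init__(self) -> None:
        self.keys: List[Vector] = []
        self.values: List[Vector] = []

    def append(self, key: Vector, value: Vector) -> None:
        # One new entry per generated token; earlier entries are never recomputed.
        self.keys.append(key)
        self.values.append(value)

    def attend(self, query: Vector) -> Vector:
        # Scaled dot-product attention of the newest query over all cached keys.
        scale = math.sqrt(len(query))
        weights = softmax([dot(query, k) / scale for k in self.keys])
        dim = len(self.values[0])
        return [sum(w * v[i] for w, v in zip(weights, self.values)) for i in range(dim)]


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_elem: int) -> int:
    # Factor of 2: every layer stores one key tensor and one value tensor.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem


if __name__ == "__main__":
    cache = KVCache()
    # Toy decode loop: reuse each token vector as its own query, key and value.
    for token_vec in ([1.0, 0.0], [0.0, 1.0], [1.0, 1.0]):
        cache.append(token_vec, token_vec)
        print(len(cache.keys), [round(x, 3) for x in cache.attend(token_vec)])
    # Hypothetical model: 32 layers, 8 KV heads, head_dim 128, 128k tokens, FP16.
    print(kv_cache_bytes(32, 8, 128, 128_000, 1, 2) / 2**30, "GiB")  # 15.625 GiB
```

With those hypothetical dimensions, a single 128,000-token request needs 15.625 GiB of KV cache before any model weights are counted. This kind of per-request growth is the memory pressure the quotes that follow describe.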

“It’s going to pound on memory really hard… It’s going to be pounding on the storage system really, really hard, which is the reason why we reinvented the storage system,” Huang said.

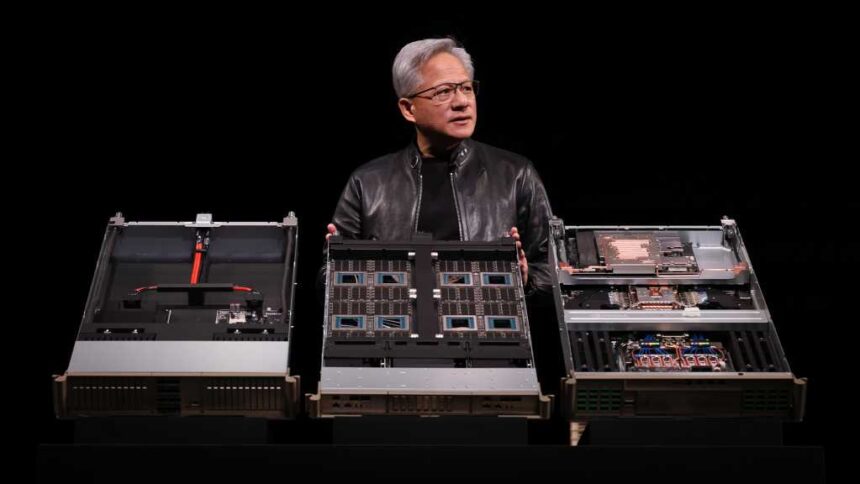

Nvidia’s blueprint turns data centers into one giant AI GPU. It is spearheaded by the Rubin GPU and the Vera CPU, which were announced at GTC. Nvidia also slipped in a new inference chip: the Groq LPU, which has significantly more memory bandwidth than GPUs and is designed for low-latency token generation.
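The link between memory bandwidth and low-latency token generation can be illustrated with a common back-of-envelope estimate. When a single request is decoded one token at a time, each step has to stream the model weights (and the KV cache) out of memory, so bandwidth rather than arithmetic sets a ceiling on tokens per second. The sketch below assumes exactly that simplified model and ignores compute limits, batching and on-chip reuse. The function names and the figures used (16 GB of weights, 8,000 versus 16,000 GB/s) are hypothetical and are not specifications of Rubin, Vera or the Groq LPU.

```python
def decode_ceiling_tokens_per_s(bandwidth_gb_s: float, weight_gb: float,
                                kv_cache_gb: float = 0.0) -> float:
    """Bandwidth-bound upper limit on single-stream decode speed.

    Assumes every generated token requires reading all weights plus the KV cache
    from memory exactly once; compute, batching and caching effects are ignored.
    """
    gb_read_per_token = weight_gb + kv_cache_gb
    return bandwidth_gb_s / gb_read_per_token


def per_token_latency_ms(bandwidth_gb_s: float, weight_gb: float,
                         kv_cache_gb: float = 0.0) -> float:
    # Latency per token is the inverse of the throughput ceiling, in milliseconds.
    return 1000.0 / decode_ceiling_tokens_per_s(bandwidth_gb_s, weight_gb, kv_cache_gb)


if __name__ == "__main__":
    for bandwidth in (8_000.0, 16_000.0):  # hypothetical GB/s figures
        print(bandwidth,
              decode_ceiling_tokens_per_s(bandwidth, 16.0),
              per_token_latency_ms(bandwidth, 16.0))
```

Under these assumptions, doubling bandwidth from 8,000 to 16,000 GB/s raises the ceiling from 500 to 1,000 tokens per second and halves per-token latency from 2 ms to 1 ms. A growing KV cache adds to the bytes read per step and pulls the ceiling back down.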