By Bruno Baloi – Lead Solution Strategy, Synadia

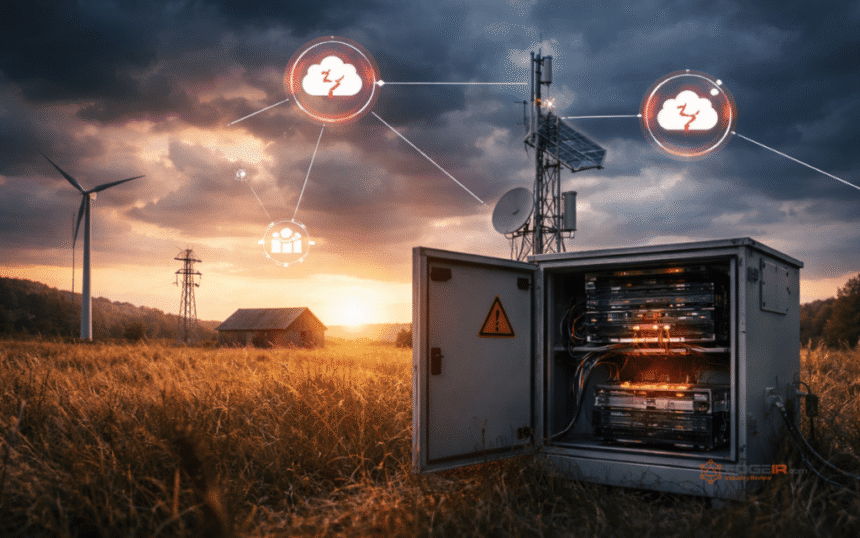

Edge computing has moved well past the hype. Manufacturers, energy companies, healthcare providers, and fleet operators are making large investments in modern edge applications, devices, and systems. Some are learning the hard way that design principles that work in a data center often break down at the edge.

The problem isn't compute capacity or storage. The problem is that most edge architectures are still designed around an assumption of reliable connectivity, and starting from that assumption sets a system up for failure.

Edge computing tends to be viewed as a geographic problem: you move workloads "closer" to data sources. That framing understates the real challenges.

The edge is a fundamentally different operating environment.

In a data center, you control the infrastructure and own the network, the power, and the physical security. At the edge (on a factory floor, a drilling rig, a vehicle, or a remote energy installation) you control very little of that, and connectivity is intermittent. You also run into device tampering and constrained bandwidth, and in many use cases a single failed message can be a critical problem.

We've identified four challenge patterns that any serious edge architecture must address: connectivity, security, distribution, and observability. Ignoring any one of them can lead to real headaches.

The most common mistake in edge-to-core system design is treating connectivity loss as an exception. Intermittent connectivity is the norm. If your architecture requires a live connection to function correctly, you have already built in your first fragile point of failure.

Store and forward

Edge systems collect and buffer events locally, then forward them once a connection is restored or available. After an outage, these systems catch up safely, without data loss and without manual intervention. Designing for disconnection first forces you to make your edge systems genuinely autonomous, which in turn makes them more resilient.
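The store-and-forward behavior described above can be sketched in a few lines. This is a minimal, in-memory illustration, not a production component: the class name `StoreAndForwardBuffer` and the `send` callback are hypothetical, and the sketch assumes `send` raises `ConnectionError` while the uplink is down. An event leaves the queue only after `send` returns, so a failed delivery is retried on the next flush rather than lost.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForwardBuffer:
    """Hypothetical edge-side buffer: record locally, forward when the link is up."""

    def __init__(self, send: Callable[[dict], None], max_events: int = 10_000):
        # Assumption: send() raises ConnectionError while the uplink is unavailable.
        self._send = send
        # Bounded queue: if it fills during a long outage, the OLDEST event is
        # dropped. A real system would persist to local disk instead.
        self._queue: Deque[dict] = deque(maxlen=max_events)

    def record(self, event: dict) -> None:
        """Always succeeds locally, whether or not the uplink is connected."""
        self._queue.append(event)

    def flush(self) -> int:
        """Forward buffered events in order and return how many were delivered.

        Stops at the first failure; undelivered events stay queued for later.
        """
        delivered = 0
        while self._queue:
            try:
                self._send(self._queue[0])  # peek, do not remove yet
            except ConnectionError:
                break  # link dropped again; keep the rest for the next flush
            self._queue.popleft()  # remove only after a successful send
            delivered += 1
        return delivered

    def __len__(self) -> int:
        return len(self._queue)
```

In use, the sensor loop calls `record()` unconditionally, and `flush()` runs on reconnect or on a timer. The bounded queue is a deliberate trade-off: edge devices have finite storage, so the sketch makes the overflow policy explicit instead of silently growing until the device fails.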

Treat edge and core as separate realms

Edge volatility, such as surging traffic, intermittent nodes, or potential tampering, should not be allowed to propagate into core systems. The two domains have different characteristics, different trust levels, and different failure modes, which is why edge and core systems need separate strategies. Separation means more than network segmentation. Your edge-to-core system should be built with:

- Different security realms and tightly scoped credentials,

- Constrained boundary paths that control exactly which subjects or channels cross between edge and core,

- And a clear division of responsibility.

Edge systems handle local filtering, inference, and immediate decision loops, while core systems handle long-lived workflows, deep analytics, and global coordination.
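A constrained boundary path boils down to a default-deny allowlist of subjects. The sketch below assumes dot-separated subjects with NATS-style wildcards (`*` matches exactly one token, `>` matches one or more trailing tokens). `BoundaryGate` and `subject_matches` are hypothetical names. In practice, this enforcement would usually live in the messaging layer's permissions rather than in application code. The sketch only makes the semantics concrete.

```python
from typing import Iterable


def subject_matches(pattern: str, subject: str) -> bool:
    """NATS-style match: '*' = exactly one token, '>' = one or more trailing tokens."""
    p_tokens = pattern.split(".")
    s_tokens = subject.split(".")
    for i, p in enumerate(p_tokens):
        if p == ">":
            # '>' is only valid as the last token and must consume at least one token.
            return i == len(p_tokens) - 1 and len(s_tokens) > i
        if i >= len(s_tokens):
            return False  # subject is shorter than the pattern
        if p != "*" and p != s_tokens[i]:
            return False  # literal token mismatch
    return len(p_tokens) == len(s_tokens)  # no trailing unmatched subject tokens


class BoundaryGate:
    """Hypothetical edge-to-core gate: only explicitly allowed subjects cross."""

    def __init__(self, allowed: Iterable[str]):
        self.allowed = list(allowed)

    def permits(self, subject: str) -> bool:
        # Default deny: anything not matched by an allowlist pattern stays at the edge.
        return any(subject_matches(p, subject) for p in self.allowed)


gate = BoundaryGate(["site1.telemetry.*", "site1.alerts.>"])
print(gate.permits("site1.telemetry.temp"))   # True
print(gate.permits("site1.debug.dump"))       # False: never crosses to core
```

Pairing each gate with a tightly scoped credential means that a tampered edge node can, at worst, publish on the few subjects it was already allowed to use.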

Flow control is not optional

When thousands of sensors are producing continuous telemetry, a single aggregated data stream can quickly become a firehose. You don't want your core systems to be overwhelmed.

Flow control is how you manage edge-to-core data pipelines effectively: by filtering, mapping, shaping, and routing events. Instead of subscribing to everything and filtering in application code, consumers should be able to express intent, declaring which events they need, in what form, and at what rate. This reduces complexity and lowers infrastructure cost. Because the policy lives in one declarative place rather than being scattered across consumers, it also makes routing auditable and lets you change it without redeploying services.
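The filtering, mapping, and shaping steps above can be expressed as data rather than code. In this sketch, `FlowRule` and `FlowPolicy` are hypothetical names, and the subject prefixes are illustrative. Each rule filters by subject prefix, maps the subject into a core-side namespace, and shapes traffic by forwarding at most one event per subject per interval. A real deployment would more likely push equivalent rules into the broker's own mapping and permission features, where available.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class FlowRule:
    """Hypothetical declarative rule applied at the edge-to-core boundary."""
    source_prefix: str     # filter: which edge subjects this rule applies to
    target_prefix: str     # map: where matching events land on the core side
    min_interval_s: float  # shape: at most one event per subject per interval


class FlowPolicy:
    def __init__(self, rules: List[FlowRule]):
        self.rules = rules
        self._last_forwarded: Dict[str, float] = {}  # subject -> last forward time

    def route(self, subject: str, ts: float) -> Optional[str]:
        """Return the core-side subject to publish to, or None to drop the event."""
        for rule in self.rules:  # first matching rule wins
            if not subject.startswith(rule.source_prefix):
                continue
            last = self._last_forwarded.get(subject)
            if last is not None and ts - last < rule.min_interval_s:
                return None  # shaped: too soon after the last forwarded event
            self._last_forwarded[subject] = ts
            return rule.target_prefix + subject[len(rule.source_prefix):]
        return None  # filtered: no rule claims this subject


policy = FlowPolicy([FlowRule("plant1.sensors.", "core.telemetry.plant1.", 1.0)])
print(policy.route("plant1.sensors.temp", 0.0))  # core.telemetry.plant1.temp
print(policy.route("plant1.sensors.temp", 0.5))  # None (rate-shaped)
```

Because the rules are plain data, they can be reviewed, versioned, and changed independently of the services that consume the resulting streams, which is the auditability argument made above.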

Observability is what makes scaling safe

The architecture shift is happening now

Successful edge computing organizations aren't the ones with the most devices. They're the ones that have internalized these principles and built architectures that embrace, rather than fight, the realities of distributed edge operations.

For edge-to-core systems, the most valuable investment you can make is in the eventing layer: the connective tissue between edge and core.

Getting connectivity design right determines whether your edge infrastructure is a competitive advantage or a liability.

For a deeper technical treatment of these patterns, including clustering models, streaming topologies, and movement patterns, the white paper Living on the Edge: Eventing for a New Dimension is well worth the read.

About the author

Bruno Baloi, Lead Solution Strategy at Synadia, is a tireless innovator and a seasoned technology management professional. As an innovator, he often takes unorthodox routes to arrive at the optimal solution or design. By bringing together diverse domain knowledge and expertise, he tries to look at problems from multiple angles and follows a philosophy of making design a way of life. He has managed geographically distributed development and field teams, instituting collaboration and knowledge sharing as a core tenet, and has fostered a culture founded firmly on principles of accountability and creativity, thereby engendering a process of continuous growth and innovation. He has extensive technical expertise in software architecture and design, with a focus on distributed architectures, information theory, complex event processing, knowledge representation, machine learning, integration, APIs/microservices, low-latency messaging, and IoT.
