Using generative artificial intelligence, a team of researchers at The University of Texas at Austin has converted sounds from audio recordings into street-view images. The visual accuracy of these generated images demonstrates that machines can replicate the human connection between audio and visual perception of environments.

In a paper published in Computers, Environment and Urban Systems, the research team describes training a soundscape-to-image AI model using audio and visual data gathered from a variety of urban and rural streetscapes and then using that model to generate images from audio recordings.

“Our study found that acoustic environments contain enough visual cues to generate highly recognizable streetscape images that accurately depict different places,” said Yuhao Kang, assistant professor of geography and the environment at UT and co-author of the study. “This means we can convert the acoustic environments into vivid visual representations, effectively translating sounds into sights.”

Using YouTube video and audio from cities in North America, Asia and Europe, the team created pairs of 10-second audio clips and image stills from the various locations and used them to train an AI model that could produce high-resolution images from audio input. They then compared AI sound-to-image creations made from 100 audio clips to their respective real-world photos, using both human and computer evaluations.

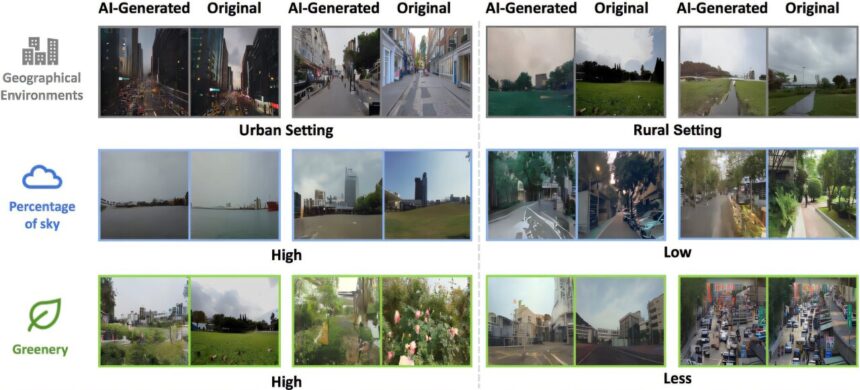

Computer evaluations compared the relative proportions of greenery, building and sky between source and generated images, whereas human judges were asked to correctly match one of three generated images to an audio sample.

The results showed strong correlations in the proportions of sky and greenery between generated and real-world images and a slightly weaker correlation in building proportions. Human participants averaged 80% accuracy in selecting the generated images that corresponded to source audio samples.
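To make the computer evaluation concrete, here is a minimal Python sketch of the idea: measure what share of each image is sky, greenery and building, then correlate those shares between real and generated images. It is an illustration only, not the study's code. The label ids, the tiny hand-made masks and the choice of the Pearson coefficient are all assumptions; the paper's actual segmentation model and statistic are not described in this article.

```python
from collections import Counter
from math import sqrt

# Hypothetical label ids for a segmented image (assumption, not from the study).
SKY, GREENERY, BUILDING, OTHER = 0, 1, 2, 3
CLASSES = {"sky": SKY, "greenery": GREENERY, "building": BUILDING}


def class_proportions(mask):
    """Return the fraction of pixels per class in a 2D label mask (list of rows)."""
    counts = Counter(label for row in mask for label in row)
    total = sum(counts.values())
    return {label: counts.get(label, 0) / total for label in CLASSES.values()}


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


# Toy 2x4 masks standing in for segmented real photos (made-up data).
real_masks = [
    [[SKY, SKY, SKY, BUILDING], [GREENERY, GREENERY, BUILDING, OTHER]],
    [[SKY, BUILDING, BUILDING, BUILDING], [BUILDING, BUILDING, OTHER, OTHER]],
    [[SKY, SKY, GREENERY, GREENERY], [GREENERY, GREENERY, GREENERY, OTHER]],
]
# Toy masks standing in for the matching generated images (made-up data).
generated_masks = [
    [[SKY, SKY, SKY, SKY], [GREENERY, GREENERY, BUILDING, OTHER]],
    [[SKY, BUILDING, BUILDING, BUILDING], [BUILDING, OTHER, OTHER, OTHER]],
    [[SKY, SKY, SKY, GREENERY], [GREENERY, GREENERY, GREENERY, OTHER]],
]

real_props = [class_proportions(m) for m in real_masks]
generated_props = [class_proportions(m) for m in generated_masks]

# One correlation per class, computed across the image pairs.
correlations = {
    name: pearson(
        [p[label] for p in real_props],
        [p[label] for p in generated_props],
    )
    for name, label in CLASSES.items()
}

for name, r in correlations.items():
    print(f"{name}: r = {r:.3f}")
```

A value near 1 for a class means the generated images track the real ones closely in how much of the frame that class occupies. For the human test, picking one of three images at random would be right about 33% of the time, which is the baseline against which the reported 80% accuracy can be read.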

“Traditionally, the ability to envision a scene from sounds is a uniquely human capability, reflecting our deep sensory connection with the environment. Our use of advanced AI techniques supported by large language models (LLMs) demonstrates that machines have the potential to approximate this human sensory experience,” Kang said.

“This suggests that AI can extend beyond mere recognition of physical surroundings to potentially enrich our understanding of human subjective experiences at different places.”

In addition to approximating the proportions of sky, greenery and buildings, the generated images often maintained the architectural styles and distances between objects of their real-world image counterparts, and they accurately reflected whether soundscapes were recorded during sunny, cloudy or nighttime lighting conditions.

The authors note that lighting information might come from variations in activity in the soundscapes. For example, traffic sounds or the chirping of nocturnal insects could reveal time of day. Such observations further the understanding of how multisensory factors contribute to our experience of a place.

“When you close your eyes and listen, the sounds around you paint pictures in your mind,” Kang said. “For instance, the distant hum of traffic becomes a bustling cityscape, while the gentle rustle of leaves ushers you into a serene forest. Each sound weaves a vivid tapestry of scenes, as if by magic, in the theater of your imagination.”

Kang’s work focuses on using geospatial AI to study the interaction of humans with their environments. In another recent paper published in Humanities and Social Sciences Communications, he and his co-authors examined the potential of AI to capture the characteristics that give cities their distinctive identities.

More information:

Yonggai Zhuang et al, From hearing to seeing: Linking auditory and visual place perceptions with soundscape-to-image generative artificial intelligence, Computers, Environment and Urban Systems (2024). DOI: 10.1016/j.compenvurbsys.2024.102122

Kee Moon Jang et al, Place identity: a generative AI’s perspective, Humanities and Social Sciences Communications (2024). DOI: 10.1057/s41599-024-03645-7

Citation:

Using AI to turn sound recordings into accurate street images (2024, November 27), retrieved 27 November 2024 from https://techxplore.com/information/2024-11-ai-accurate-street-images.html
