Neural Radiance Fields (NeRF) is an enchanting method that creates three-dimensional (3D) representations of a scene from a set of two-dimensional (2D) pictures, captured from completely different angles. It really works by coaching a deep neural community to foretell the colour and density at any level in 3D area.

To do that, it casts imaginary mild rays from the digicam by means of every pixel in all enter pictures, sampling factors alongside these rays with their 3D coordinates and viewing course. Utilizing this info, NeRF reconstructs the scene in 3D and might render it from solely new views, a course of referred to as novel view synthesis (NVS).

Past nonetheless pictures, a video may also be used, with every body of the video handled as a static picture. Nonetheless, present strategies are extremely delicate to the standard of the movies.

Movies captured with a single digicam, corresponding to these from a cellphone or drone, inevitably undergo from movement blur attributable to quick object movement or digicam shake. This makes it troublesome to create sharp, dynamic NVS. It’s because most present deblurring-based NVS strategies are designed for static multi-view pictures, which fail to account for international digicam and native object movement. As well as, blurry movies typically result in inaccurate digicam pose estimations and lack of geometric precision.

To handle these points, a analysis staff collectively led by Assistant Professor Jihyong Oh from the Graduate Faculty of Superior Imaging Science (GSIAM) at Chung-Ang College (CAU) in Korea, and Professor Munchurl Kim from Korea Superior Institute of Science and Expertise (KAIST), Korea, together with Mr. Minh-Quan Viet Bui, Mr. Jongmin Park, developed MoBluRF, a two-stage movement deblurring technique for NeRFs.

“Our framework is able to reconstructing sharp 4D scenes and enabling NVS from blurry monocular movies utilizing movement decomposition, whereas avoiding masks supervision, considerably advancing the NeRF discipline,” explains Dr. Oh. Their research is printed in IEEE Transactions on Pattern Analysis and Machine Intelligence.

MoBluRF consists of two principal levels: Base Ray Initialization (BRI) and Movement Decomposition-based Deblurring (MDD). Present deblurring-based NVS strategies try and predict hidden sharp mild rays in blurry pictures, referred to as latent sharp rays, by reworking a ray referred to as the bottom ray. Nonetheless, instantly utilizing enter rays in blurry pictures as base rays can result in inaccurate prediction. BRI addresses this difficulty by roughly reconstructing dynamic 3D scenes from blurry movies and refining the initialization of “base rays” from imprecise digicam rays.

Subsequent, these base rays are used within the MDD stage to precisely predict latent sharp rays by means of an Incremental Latent Sharp-rays Prediction (ILSP) technique. ILSP incrementally decomposes movement blur into international digicam movement and native object movement elements, significantly bettering the deblurring accuracy. MoBluRF additionally introduces two novel loss features, one which separates static and dynamic areas with out movement masks, and one other that improves geometric accuracy of dynamic objects, two areas the place earlier strategies struggled.

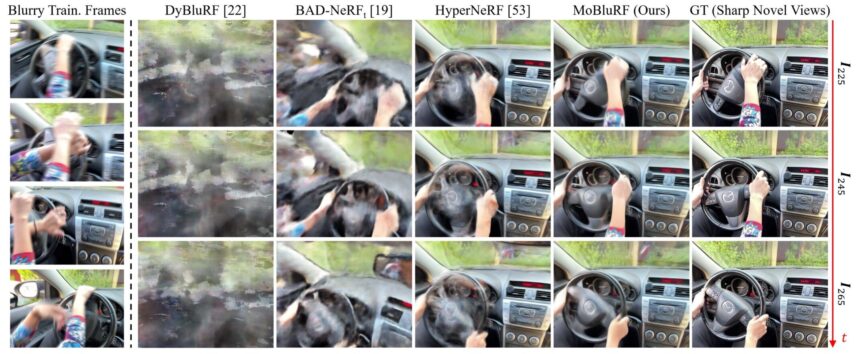

Owing to this modern design, MoBluRF outperforms state-of-the-art strategies with important margins in varied datasets, each quantitatively and qualitatively. Additionally it is strong towards various levels of blur.

“By enabling deblurring and 3D reconstruction from informal handheld captures, our framework allows smartphones and different shopper units to provide sharper and extra immersive content material,” remarks Dr. Oh. “It may additionally assist create crisp 3D fashions of shaky footages from museums, enhance scene understanding and security for robots and drones, and scale back the necessity for specialised seize setups in digital and augmented actuality.”

MoBluRF marks a brand new course for NeRFs, enabling high-quality 3D reconstructions from atypical blurry movies recorded with on a regular basis units.

Extra info:

Minh-Quan Viet Bui et al, MoBluRF: Movement Deblurring Neural Radiance Fields for Blurry Monocular Video, IEEE Transactions on Sample Evaluation and Machine Intelligence (2025). DOI: 10.1109/tpami.2025.3574644

Offered by

Chung Ang College

Quotation:

Two-stage framework reconstructs sharp 4D scenes from blurry handheld movies (2025, September 19)

retrieved 20 September 2025

from https://techxplore.com/information/2025-09-stage-framework-reconstructs-sharp-4d.html

This doc is topic to copyright. Other than any truthful dealing for the aim of personal research or analysis, no

half could also be reproduced with out the written permission. The content material is offered for info functions solely.