Tag: Inference

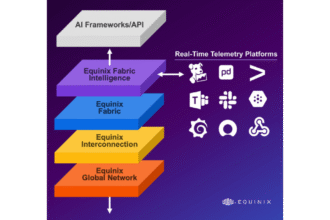

Equinix expands platform for distributed AI as inference moves closer to the edge

Equinix unveiled its Distributed AI infrastructure at its inaugural AI Summit, designed to support the next wave of…

Nvidia targets inference as AI’s next battleground with Groq 3 LPX

It’s a giant value play, he pointed out, and it “has to occur in every single place, on a…

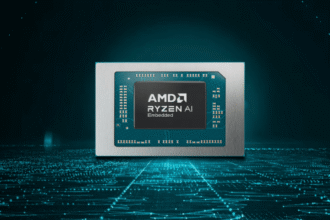

AMD targets industrial edge AI with new Ryzen embedded chips built for real-time inference

AMD has launched its Ryzen AI Embedded P100 Series processors, delivering scalable and efficient AI compute (AI-on-Chip)…

Why the future of AI inference lies at the edge

By Stephane Henry, Group VP of Edge AI Solutions at STMicroelectronics. AI is becoming a transformative force…

Gcore adds NVIDIA Dynamo to boost GPU efficiency and cut AI inference latency

Edge AI solutions provider Gcore has integrated NVIDIA Dynamo into its AI inference solutions, offering up to…

Arrcus targets AI inference bottleneck with policy-aware network fabric

“Switching is basically an easier operation. You simply sort of ship a packet or not,” Ayyar explained. “Routing…

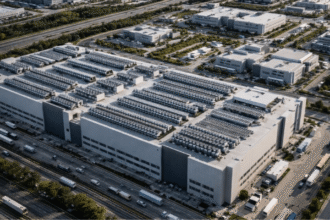

AI inference moves closer to the grid as smaller data centers take shape

EPRI, NVIDIA, Prologis and InfraPartners have announced they are working together to create smaller-scale (5-20 MW) distributed data centers…

Nvidia claims 10x cost savings with open-source inference models

Nvidia noted that cost per token went from 20 cents on the older Hopper platform to 10 cents…

Nokia and Blaize sign edge AI inference MOU targeting APAC networks

Nokia and AI-enabled edge computing chip firm Blaize signed a strategic Memorandum of Understanding (MOU) to introduce edge…

Where AI inference will land: The enterprise IT equation

By Amir Khan, President, CEO & Founder of Alkira. For technology leaders in the enterprise, the question…

Microsoft launches its second generation AI inference chip, Maia 200

“In practical terms, Maia 200 can effortlessly run today’s largest models, with plenty of headroom for…

OpenAI turns to Cerebras in a mega deal to scale AI inference infrastructure

Analysts expect AI workloads to grow more diverse and more demanding in the coming years, driving the need…