Judy Hill, Deputy for High Performance Computing at Lawrence Livermore National Laboratory (LLNL), shares a look at the Livermore Computing high-performance computing centre and the groundbreaking work taking place there.

High-performance computing (HPC) enables discovery and innovation through the extraordinary simulations it makes possible. HPC is now high on the list of priorities for the US, which is harnessing its potential to save energy, reduce emissions, improve competitiveness, and strengthen the nation’s position as a global technology leader. At U.S. Department of Energy (DOE) facilities such as Lawrence Livermore National Laboratory (LLNL), HPC has become the ‘third pillar’ of research, joining theory and experiment as an equal partner.

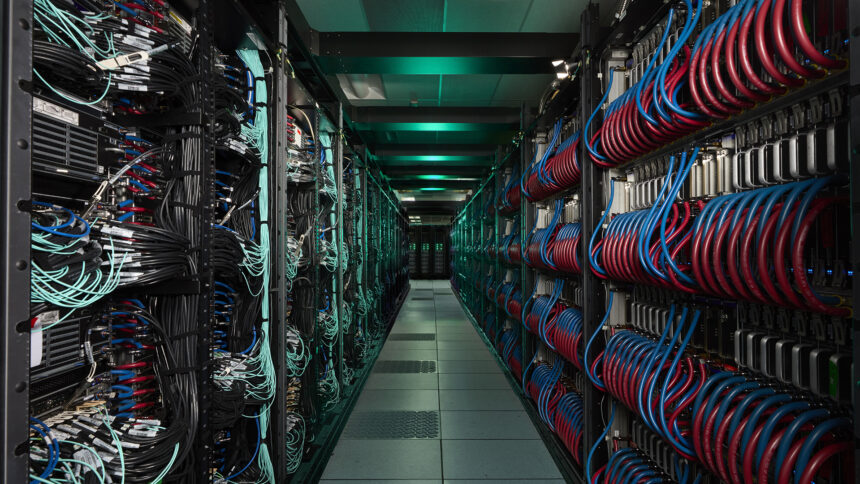

LLNL’s premier HPC centre, Livermore Computing, delivers systems, tools, and expertise to support the advancement of HPC capabilities. The centre’s missions are threefold:

To learn more about the work taking place at Livermore Computing and its potential for a wide range of real-world applications, The Innovation Platform spoke to LLNL’s Deputy for High Performance Computing, Judy Hill.

Can you briefly elaborate on how LLNL is contributing to the HPC landscape in the US and globally?

LLNL has been a leader in HPC for decades, dating back to the laboratory’s founding. The Lab’s first major purchase in 1953 was a computer – the UNIVAC 1 – and since then, computing has been central to our mission. That legacy continues today, as we push the boundaries in several important ways.

First, there’s the hardware itself. We’ve recently brought El Capitan – the fastest benchmarked supercomputer in the world – online, achieving exascale performance with nearly two exaFLOPs (two quintillion calculations per second) of realised computing power. Before that, we operated Sierra and Lassen, which have been essential systems for everything from weapons simulations to materials science discovery to advanced artificial intelligence (AI) and machine learning research. These aren’t merely impressive machines – they’re tools that enable us to tackle problems we couldn’t solve before.

To field these advanced supercomputers, we work closely with companies such as HPE, AMD, NVIDIA, and Intel in co-design partnerships. Together, we design these systems to meet the specific needs of our applications. For example, the AMD MI300As in El Capitan are accelerated processing units (APUs) that combine central processing unit (CPU) and graphics processing unit (GPU) capabilities in a single package with shared memory. This design resulted from direct input about our workloads. We needed tight integration between a CPU for general-purpose computing and an accelerator such as a GPU, but without the bottlenecks of separate chips.

Another important part of our impact comes through our open-source software development. We’ve released dozens of projects on GitHub that the broader HPC community relies on, including Spack for package management, Flux for workload management, and many others. When we solve a problem for our systems, we share that solution so others can benefit. This has become a cornerstone of how we contribute to the broader HPC ecosystem.

Finally, we’re investing in people. We’re training the next generation of computational scientists through summer programmes and educational initiatives, and we’re sharing what we learn with the broader community. It’s all part of keeping the US at the forefront of computing while ensuring that we’re solving problems that matter.

What are the main priorities for Livermore Computing at present, and how do these fit with national and international goals for HPC?

Our priorities span both near-term operational challenges and longer-term strategic initiatives. In the near term, we’re focused on enabling our users to make efficient use of El Capitan. Beyond optimising their application codes, this includes helping them integrate AI capabilities into their workflows. Looking further ahead, we stand on the precipice of a fundamental shift in scientific computing and HPC. We believe that users will come to expect to interact with an HPC environment in much the same way as a cloud environment, rather than through our historical monolithic, batch-scheduled systems.

Efficient operation and use of El Capitan

With El Capitan now operational, our main priority is to continue optimising its performance and to ensure that applications can fully utilise its capabilities. This means tuning critical codes for the MI300A architecture, developing memory models that efficiently leverage the unique APU memory design, and maximising system utilisation and reliability.

AI and machine learning integration

AI is everywhere, and Livermore Computing is no different. As part of the Department of Energy’s Genesis Mission, we’re rapidly enabling AI capabilities that enhance our application developers’ productivity when writing software for El Capitan and our other systems. We’re also using machine-learned models to accelerate physics simulations, developing surrogate models that can replace expensive calculations, and applying AI to the analysis of data from experiments and simulations.

Evolving to the HPC Center of the Future

We’re also looking at ‘what’s next’ for HPC. In terms of system architectures, we’re already working with technology vendors on the next generation of memory subsystems and interconnects, which will be critical for future supercomputers. We remain in active dialogue with chip designers, stressing the capabilities our applications require as they consider future CPU, GPU, and other accelerator designs. However, the real transformation is likely to be the convergence of cloud methodologies with the HPC ecosystem. Users don’t necessarily care which system solves their problems, only that they get correct answers on a known timescale. We’re working to make the HPC ecosystem more ‘cloud-like’, providing a better overall experience for the end user.

What are the main challenges threatening innovation in HPC? How can these be overcome?

The HPC landscape is evolving rapidly, presenting both exciting opportunities and significant challenges that will shape its future. Historically, HPC has overcome obstacles by leveraging broader industry trends. Examples include the shift from vector processors to commodity clusters, the rise of GPU acceleration, and now the convergence with cloud computing. This ability to adapt and innovate remains a core strength as we navigate the challenges ahead.

The AI revolution and resource competition

The explosive growth of AI and machine learning presents both opportunities and threats to more traditional HPC. AI techniques can enhance modelling and simulation through surrogate models and improved data analysis. However, the massive commercial investment in AI is reshaping the computing landscape in ways that may not align with HPC’s needs. GPU manufacturers are increasingly optimising their products for AI training and inference workloads, which can use lower-precision arithmetic. They are putting less emphasis on traditional scientific computing, which often requires double-precision floating-point performance. The enormous demand for AI compute is also driving up costs and creating supply constraints for high-end accelerators. This is a case where HPC must once again adapt to broader industry trends. We can do that by looking at the opportunities mixed-precision algorithms may afford, and by expanding our conversation and use cases to be ‘HPC and AI’ rather than ‘HPC or AI’.
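One textbook example of the mixed-precision opportunity mentioned above is iterative refinement. The expensive factorisation of a linear system is done in low precision. Double-precision accuracy is then recovered through cheap corrections driven by residuals computed in double precision. The sketch below is a generic illustration under stated assumptions, not LLNL’s code:

- Float32 is emulated on a CPU by rounding through Python’s `struct` module.
- The function names (`lu_factor_f32`, `lu_solve_f32`, `mixed_precision_solve`) are hypothetical.
- The matrix is assumed to be small, non-singular, and well conditioned.

```python
import struct


def to_f32(x: float) -> float:
    """Round a Python float (an IEEE double) to the nearest IEEE single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def lu_factor_f32(A):
    """Hypothetical helper: LU factorisation with partial pivoting, every intermediate rounded to float32.

    This O(n^3) step is the expensive one, so it is done once, in low precision.
    Returns the packed L (unit diagonal implied) and U factors plus the row permutation.
    """
    n = len(A)
    LU = [[to_f32(v) for v in row] for row in A]
    perm = list(range(n))
    for k in range(n):
        # Partial pivoting: move the largest remaining entry of column k to row k.
        p = max(range(k, n), key=lambda i: abs(LU[i][k]))
        LU[k], LU[p] = LU[p], LU[k]
        perm[k], perm[p] = perm[p], perm[k]
        for i in range(k + 1, n):
            LU[i][k] = to_f32(LU[i][k] / LU[k][k])  # multiplier, stored in the L part
            for j in range(k + 1, n):
                LU[i][j] = to_f32(LU[i][j] - to_f32(LU[i][k] * LU[k][j]))
    return LU, perm


def lu_solve_f32(LU, perm, b):
    """Hypothetical helper: solve A x = b using the float32 factors (O(n^2), cheap)."""
    n = len(LU)
    y = [to_f32(b[perm[i]]) for i in range(n)]
    for i in range(n):  # forward substitution with unit lower-triangular L
        for j in range(i):
            y[i] = to_f32(y[i] - to_f32(LU[i][j] * y[j]))
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution with U
        s = y[i]
        for j in range(i + 1, n):
            s = to_f32(s - to_f32(LU[i][j] * x[j]))
        x[i] = to_f32(s / LU[i][i])
    return x


def mixed_precision_solve(A, b, iters=3):
    """Iterative refinement: float32 factorisation, float64 residuals and updates."""
    LU, perm = lu_factor_f32(A)
    x = lu_solve_f32(LU, perm, b)
    for _ in range(iters):
        # Residual r = b - A x, computed in full double precision.
        r = [bi - sum(a * xj for a, xj in zip(row, x)) for row, bi in zip(A, b)]
        d = lu_solve_f32(LU, perm, r)  # cheap correction reusing the low-precision factors
        x = [xi + di for xi, di in zip(x, d)]  # update accumulated in double precision
    return x


if __name__ == "__main__":
    A = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
    x_true = [0.1, 0.2, 0.3]
    b = [sum(a * xt for a, xt in zip(row, x_true)) for row in A]
    LU, perm = lu_factor_f32(A)
    x32 = lu_solve_f32(LU, perm, b)
    xmp = mixed_precision_solve(A, b)
    print("float32-only error:", max(abs(u - v) for u, v in zip(x32, x_true)))
    print("refined error:     ", max(abs(u - v) for u, v in zip(xmp, x_true)))
```

Only the cubic-cost factorisation runs in low precision. The residual and update, which are cheap, stay in double precision. This is why the approach suits hardware whose low-precision throughput far exceeds its FP64 throughput. LAPACK’s `DSGESV` routine applies the same single/double refinement idea in production code.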

The cloud computing paradigm shift

While cloud computing offers many advantages, integrating it with traditional HPC presents challenges. The economics of cloud computing don’t always favour the sustained, high-utilisation workloads typical of most HPC simulation campaigns. There are also cultural and workflow differences between traditional HPC users and cloud-native approaches. Our envisioned HPC Center of the Future is a hybrid model that combines the best of both worlds. It pairs on-premises infrastructure that offers cloud-like interfaces and capabilities with potentially selective use of commercial clouds for appropriate workloads. At LLNL, we’re actively working on this transformation, recognising that the future of HPC likely involves a spectrum of computing resources rather than a single monolithic system.

Workforce challenges

The HPC ecosystem has become increasingly complex, and there’s a critical shortage of people with the specialised skills it needs. The future of modelling and simulation calls not just for traditional HPC expertise but also for deep knowledge of AI/ML, advanced programming models, and emerging architectures. Universities aren’t producing enough graduates with these skills, and competition for people with this breadth of knowledge is intense. We need to invest in education and training programmes that prepare the next generation of HPC professionals. We also need to attract people to those programmes by highlighting the important scientific problems that can only be solved with HPC resources.

What are some of the major discoveries or innovations that LLNL’s HPC capabilities have facilitated or supported?

LLNL’s HPC capabilities have driven breakthroughs both in its national security mission space and, more broadly, in scientific disciplines ranging from materials science to fusion to astrophysics. Examples include:

Stockpile modernisation

As part of the National Nuclear Security Administration’s (NNSA) core nuclear security mission, LLNL contributes to the modernisation of the nuclear stockpile. This work ensures the stockpile’s safety, security, and reliability without relying on underground nuclear testing. HPC enables complex, high-fidelity multiphysics simulations of weapons performance, ageing, and materials under extreme conditions. These simulations model everything from microsecond nuclear reactions to decades-long degradation. Systems like El Capitan deliver higher-fidelity simulations that improve stockpile assessments.

Inertial confinement fusion and the National Ignition Facility

LLNL achieved a historic breakthrough in December 2022 when the National Ignition Facility (NIF) demonstrated fusion ignition, producing more energy from fusion than the laser energy delivered to the target. This achievement required decades of HPC-enabled research to model the complex physics of laser-driven fusion, including radiation transport, hydrodynamics, and plasma physics. HPC continues to play a crucial role in designing NIF targets, optimising laser pulse shapes, and understanding experimental results. Most recently, the Inertial Confinement on El Capitan (ICECap) project has been developing an AI-driven workflow, built on top of large-scale multiphysics simulations. The workflow optimises the design of the ignition capsule and hohlraum, which together make up the fusion target.

COVID, GUIDE, and computational biology

During the COVID-19 pandemic, LLNL rapidly pivoted its HPC resources to support the national response. Scientists used LLNL HPC machines to screen millions of potential antibody variants against SARS-CoV-2 proteins and identify promising therapeutic candidates. This AI-plus-HPC workflow allowed researchers to narrow that vast design space to just 376 top computational designs for experimental testing. The result was a dramatically faster redesign process than earlier laboratory-only discovery. The work has since evolved into projects such as GUIDE. In GUIDE, researchers are demonstrating how HPC+AI can help redesign antibody therapeutics whose ability to fight viruses has been compromised by viral evolution, and restore their effectiveness.

Please note, this article will also appear in the twenty-fifth edition of our quarterly publication.