
The whale has returned.

After rocking the global AI and business community early this year with the January 20 initial release of its hit open source reasoning AI model R1, the Chinese startup DeepSeek, a spinoff of the formerly only locally well-known Hong Kong quantitative analysis firm High-Flyer Capital Management, has released DeepSeek-R1-0528, a significant update that brings DeepSeek’s free and open model near parity in reasoning capabilities with proprietary paid models such as OpenAI’s o3 and Google Gemini 2.5 Pro.

This update is designed to deliver stronger performance on complex reasoning tasks in math, science, business and programming, along with enhanced features for developers and researchers.

Like its predecessor, DeepSeek-R1-0528 is available under the permissive and open MIT License, supporting commercial use and allowing developers to customize the model to their needs.

Open-source model weights are available via the AI code sharing community Hugging Face, and detailed documentation is provided for those deploying locally or integrating via the DeepSeek API.

Existing users of the DeepSeek API will automatically have their model inferences updated to R1-0528 at no additional cost. The current cost for DeepSeek’s API is

For those looking to run the model locally, DeepSeek has published detailed instructions on its GitHub repository. The company also encourages the community to provide feedback and questions through its service email.

Individual users can try it for free through DeepSeek’s website, though you’ll need to provide a phone number or Google Account access to sign in.

Enhanced reasoning and benchmark performance

At the core of the update are significant improvements in the model’s ability to handle challenging reasoning tasks.

DeepSeek explains in its new model card on Hugging Face that these improvements stem from leveraging increased computational resources and applying algorithmic optimizations in post-training. This approach has resulted in notable improvements across various benchmarks.

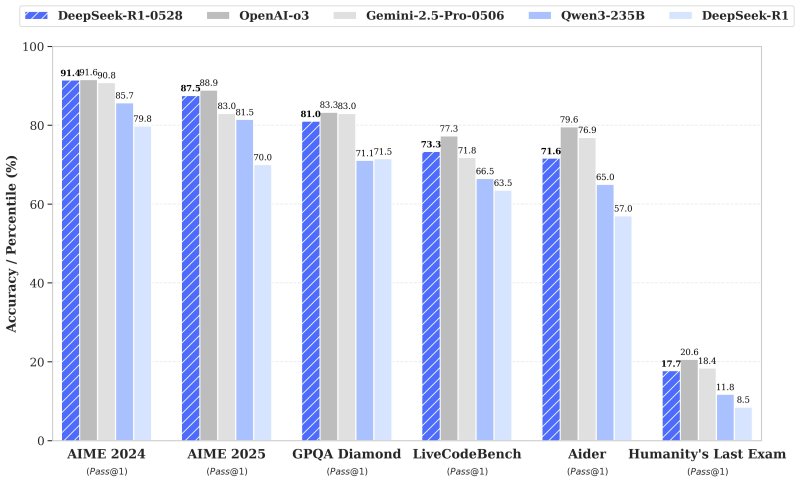

In the AIME 2025 test, for instance, DeepSeek-R1-0528’s accuracy jumped from 70% to 87.5%, indicating deeper reasoning processes that now average 23,000 tokens per question compared to 12,000 in the previous version.

Coding efficiency additionally noticed a lift, with accuracy on the LiveCodeBench dataset rising from 63.5% to 73.3%. On the demanding “Humanity’s Final Examination,” efficiency greater than doubled, reaching 17.7% from 8.5%.

These advances put DeepSeek-R1-0528 closer to the performance of established models like OpenAI’s o3 and Gemini 2.5 Pro, according to internal evaluations. Both of those models have rate limits and/or require paid subscriptions to access.

UX upgrades and new features

Beyond performance improvements, DeepSeek-R1-0528 introduces several new features aimed at enhancing the user experience.

The update adds support for JSON output and function calling, features that should make it easier for developers to integrate the model’s capabilities into their applications and workflows.
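For readers unfamiliar with function calling, the Python sketch below shows roughly what such an integration involves: the application declares a tool in the request, and the model replies with a JSON string of arguments that the application decodes. The OpenAI-style field names, the model id `deepseek-reasoner` and the `get_weather` tool are assumptions for illustration and are not taken from the article; check DeepSeek’s API documentation for the exact request format. No network call is made.

```python
import json


def build_request(question: str) -> dict:
    """Build a chat request that declares one callable tool.

    Assumptions (not from the article): OpenAI-style field names, the
    model id "deepseek-reasoner", and the hypothetical get_weather tool.
    """
    return {
        "model": "deepseek-reasoner",  # assumed model id
        "messages": [{"role": "user", "content": question}],
        "tools": [{
            "type": "function",
            "function": {
                "name": "get_weather",  # hypothetical tool
                "description": "Look up the current weather for a city.",
                "parameters": {
                    "type": "object",
                    "properties": {"city": {"type": "string"}},
                    "required": ["city"],
                },
            },
        }],
    }


def parse_tool_arguments(raw_arguments: str) -> dict:
    """Decode the JSON string of arguments returned for a tool call."""
    args = json.loads(raw_arguments)
    if not isinstance(args, dict):
        raise ValueError("tool arguments must be a JSON object")
    return args
```

Validating the decoded arguments before acting on them is a sensible habit, since the model's output is still generated text.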

Front-end capabilities have also been refined, and DeepSeek says these changes will create a smoother, more efficient interaction for users.

Additionally, the model’s hallucination rate has been reduced, contributing to more reliable and consistent output.

One notable update is the introduction of system prompts. Unlike the previous version, which required a special token at the start of the output to activate “thinking” mode, this update removes that need, streamlining deployment for developers.

Smaller variants for those with more limited compute budgets

Alongside this release, DeepSeek has distilled its chain-of-thought reasoning into a smaller variant, DeepSeek-R1-0528-Qwen3-8B, which should help those enterprise decision-makers and developers who don’t have the hardware necessary to run the full

This distilled version reportedly achieves state-of-the-art performance among open-source models on tasks such as AIME 2024, outperforming Qwen3-8B by 10% and matching Qwen3-235B-thinking.

According to Modal, running an 8-billion-parameter large language model (LLM) in half-precision (FP16) requires approximately 16 GB of GPU memory, equating to about 2 GB per billion parameters.

Therefore, a single high-end GPU with at least 16 GB of VRAM, such as the NVIDIA RTX 3090 or 4090, is sufficient to run an 8B LLM in FP16 precision. For further quantized models, GPUs with 8–12 GB of VRAM, like the RTX 3060, can be used.
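The arithmetic behind that rule of thumb is simple: FP16 stores each parameter in 2 bytes, so memory for the weights scales directly with parameter count. The Python sketch below works it through; the `weight_memory_gb` helper is an illustrative name, and the 8-bit and 4-bit rows are assumed quantization levels rather than figures from the article. It counts weights only, so real usage will be higher once activations and the attention cache are included.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Estimate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    bytes_per_param = bits_per_param / 8
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


if __name__ == "__main__":
    # 16-bit matches the FP16 figure above; 8 and 4 bits are assumed
    # quantization levels used here for comparison.
    for bits in (16, 8, 4):
        print(f"8B model at {bits}-bit: ~{weight_memory_gb(8, bits):.0f} GB")
```

At 16 bits this gives the 16 GB quoted above, while the lower-precision rows show why quantized versions can fit on cards with 8–12 GB of VRAM.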

DeepSeek believes this distilled model will prove useful for academic research and industrial applications requiring smaller-scale models.

Initial AI developer and influencer reactions

The update has already drawn attention and praise from developers and enthusiasts on social media.

Haider aka “@slow_developer” shared on X that DeepSeek-R1-0528 “is just incredible at coding,” describing how it generated clean code and working tests for a word scoring system challenge, both of which ran perfectly on the first try. According to him, only o3 had previously managed to match that performance.

Meanwhile, Lisan al Gaib posted that “DeepSeek is aiming for the king: o3 and Gemini 2.5 Pro,” reflecting the consensus that the new update brings DeepSeek’s model closer to these top performers.

Another AI news and rumor influencer, Chubby, commented that “DeepSeek was cooking!” and highlighted how the new version is nearly on par with o3 and Gemini 2.5 Pro.

Chubby even speculated that the latest R1 update might indicate that DeepSeek is preparing to release its long-awaited and presumed “R2” frontier model soon as well.

Looking Ahead

The release of DeepSeek-R1-0528 underscores DeepSeek’s commitment to delivering high-performing, open-source models that prioritize reasoning and usability. By combining measurable benchmark gains with practical features and a permissive open-source license, DeepSeek-R1-0528 is positioned as a valuable tool for developers, researchers, and enthusiasts looking to harness the latest in language model capabilities.
