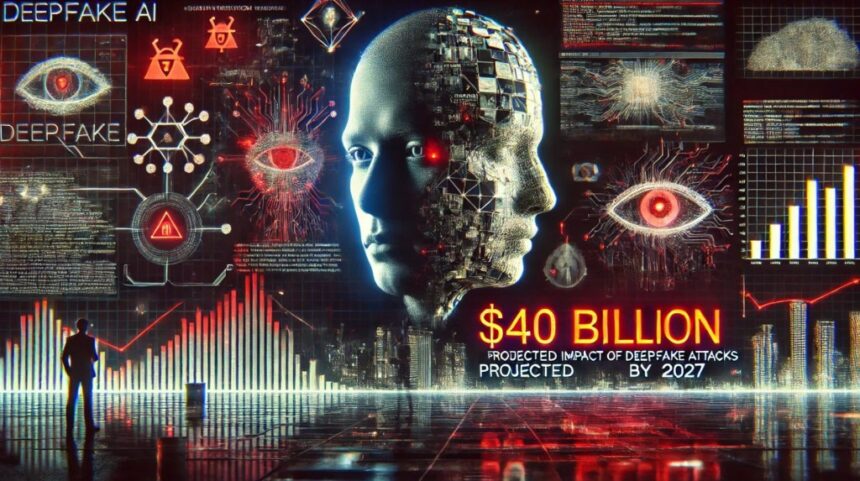

Now one of the fastest-growing forms of adversarial AI, deepfake-related losses are expected to soar from $12.3 billion in 2023 to $40 billion by 2027, growing at an astounding 32% compound annual growth rate. Deloitte sees deepfakes proliferating in the years ahead, with banking and financial services being a primary target.

Deepfakes typify the cutting edge of adversarial AI attacks, achieving a 3,000% increase last year alone. Deepfake incidents are projected to rise by 50% to 60% in 2024, with 140,000 to 150,000 cases predicted globally this year.

The latest generation of generative AI apps, tools and platforms provides attackers with what they need to create deepfake videos, impersonated voices, and fraudulent documents quickly and at a very low cost. Pindrop’s 2024 Voice Intelligence and Security Report estimates that deepfake fraud aimed at contact centers is costing $5 billion annually. The report underscores how severe a threat deepfake technology is to banking and financial services.

Bloomberg reported last year that “there’s already an entire cottage industry on the dark web that sells scamming software from $20 to thousands of dollars.” A recent infographic based on Sumsub’s Identity Fraud Report 2023 provides a global view of the rapid growth of AI-powered fraud.

Source: Statista, How Dangerous are Deepfakes and Other AI-Powered Fraud? March 13, 2024

Enterprises aren’t prepared for deepfakes and adversarial AI

Adversarial AI creates new attack vectors no one sees coming and creates a more complex, nuanced threatscape that prioritizes identity-driven attacks.

Unsurprisingly, one in three enterprises don’t have a strategy to address the risks of an adversarial AI attack that would most likely start with deepfakes of their key executives. Ivanti’s latest research finds that 30% of enterprises have no plans for identifying and defending against adversarial AI attacks.

The Ivanti 2024 State of Cybersecurity Report found that 74% of enterprises surveyed are already seeing evidence of AI-powered threats. The vast majority, 89%, believe that AI-powered threats are just getting started. Of the CISOs, CIOs and IT leaders Ivanti interviewed, 60% are afraid their enterprises are not prepared to defend against AI-powered threats and attacks. Using a deepfake as part of an orchestrated strategy that includes phishing, software vulnerabilities, ransomware and API-related vulnerabilities is becoming more commonplace. This aligns with the threats security professionals expect to become more dangerous due to gen AI.

Source: Ivanti 2024 State of Cybersecurity Report

Attackers concentrate deepfake efforts on CEOs

VentureBeat regularly hears from enterprise software cybersecurity CEOs who prefer to stay anonymous about how deepfakes have progressed from easily identified fakes to recent videos that look legitimate. Voice and video deepfakes of industry executives appear to be a favorite attack strategy, aimed at defrauding their companies of millions of dollars. Adding to the threat is how aggressively nation-states and large-scale cybercriminal organizations are doubling down on developing, hiring and growing their expertise with generative adversarial network (GAN) technologies. Of the thousands of CEO deepfake attempts that have occurred this year alone, the one targeting the CEO of the world’s biggest ad firm shows how sophisticated attackers are becoming.

In a recent Tech News Briefing with the Wall Street Journal, CrowdStrike CEO George Kurtz explained how improvements in AI are helping cybersecurity practitioners defend systems while also commenting on how attackers are using it. Kurtz spoke with WSJ reporter Dustin Volz about AI, the 2024 U.S. election, and threats posed by China and Russia.

“The deepfake technology today is so good. I think that’s one of the areas that you really worry about. I mean, in 2016, we used to track this, and you would see people actually have conversations with just bots, and that was in 2016. And they’re literally arguing or they’re promoting their cause, and they’re having an interactive conversation, and it’s like there’s nobody even behind the thing. So I think it’s pretty easy for people to get wrapped up into that’s real, or there’s a narrative that we want to get behind, but a lot of it can be driven and has been driven by other nation states,” Kurtz said.

CrowdStrike’s Intelligence team has invested a significant amount of time in understanding the nuances of what makes a convincing deepfake and what direction the technology is moving to attain maximum impact on viewers.

Kurtz continued, “And what we’ve seen in the past, we spent a lot of time researching this with our CrowdStrike intelligence team, is it’s a little bit like a pebble in a pond. Like you’ll take a topic or you’ll hear a topic, anything related to the geopolitical environment, and the pebble gets dropped in the pond, and then all these waves ripple out. And it’s this amplification that takes place.”

CrowdStrike is known for its deep expertise in AI and machine learning (ML) and its unique single-agent model, which has proven effective in driving its platform strategy. With such deep expertise in the company, it’s understandable how its teams would experiment with deepfake technologies.

“And if now, in 2024, with the ability to create deepfakes, and some of our internal guys have made some funny spoof videos with me and it just to show me how scary it is, you could not tell that it was not me in the video. So I think that’s one of the areas that I really get concerned about,” Kurtz said. “There’s always concern about infrastructure and those sort of things. Those areas, a lot of it is still paper voting and the like. Some of it isn’t, but how you create the false narrative to get people to do things that a nation-state wants them to do, that’s the area that really concerns me.”

Enterprises need to step up to the challenge

Enterprises are running the risk of losing the AI war if they don’t stay at parity with attackers’ fast pace of weaponizing AI for deepfake attacks and all other forms of adversarial AI. Deepfakes have become so commonplace that the Department of Homeland Security has issued a guide, Increasing Threats of Deepfake Identities.
